- Part 1 - Journey through the .NET internals - Sorting

- Part 2 - List.Sort internals

- Part 3 - Array.Sort && TrySZSort

- Part 4 - This Article

- Part 5 - Calling Conventions

Last part ended with a sneak peek of a native code. This part is going to expand on it. We are entering CLR world. But, first things first. Why do all this complicated stuff and not have TrySZSort in managed code? The answer is of course speed, let’s look into that.

Why part of the Sort is in the native code?

We can easily check how this code works in managed code. It was mentioned before but if you use custom IComparer a managed version of the code is used. I am going to use BenchmarkDotNet to check the difference. This library is excellent and beats Stopwatch based method.

Code below defines custom comparer as we need it to force managed version of the sort. Then BenchmarkDotNet is used to generate a benchmark. There are four different runs: unmanaged and managed with the size of the list from ten to ten thousand. Each list contains random integers (0-100 range).

internal class CustomIntComparer : IComparer<int>

{

public int Compare(int x, int y)

{

return x < y ? -1 : (x == y ? 0 : 1);

}

}

[MemoryDiagnoser]

public class SortTest

{

[Params(10, 100, 1000, 10000)]

public int ListSize;

private List<int> ToSort;

private List<int> ToSort1;

[GlobalSetup]

public void Setup()

{

var rnd = new Random();

ToSort = new List<int>();

ToSort1 = new List<int>();

foreach(var i in Enumerable.Range(0, ListSize))

{

var val = rnd.Next(0, 100);

ToSort.Add(val);

ToSort1.Add(val);

};

}

private void NativeSortCall()

{

ToSort.Sort();

}

private void ManagedSortCall()

{

ToSort1.Sort(new CustomIntComparer());

}

[Benchmark]

public void NativeSort() => NativeSortCall();

[Benchmark]

public void ManagedSort() => ManagedSortCall();

}

public class Program

{

public static void Main(string[] args)

{

BenchmarkRunner.Run<SortTest>();

}

}Results:

Method | ListSize | Mean

------------ |--------- |--------------:

NativeSort | 10 | 27.16 ns

ManagedSort | 10 | 116.47 ns

NativeSort | 100 | 326.75 ns

ManagedSort | 100 | 1,431.44 ns

NativeSort | 1000 | 5,450.18 ns

ManagedSort | 1000 | 27,412.47 ns

NativeSort | 10000 | 87,920.78 ns

ManagedSort | 10000 | 305,439.37 ns This test is definitely not perfect but it still shows a 4-5x difference in favour of a native unmanaged code. Perfectly written and manually tuned native code will be faster, that is the case with TrySZSort. Managed code hides manual memory handling, providing simplicity and security. It helps commoditize work as it is cheaper to write software. But like every abstraction, there is a price to pay. We give up some control and flexibility to optimize the code by ourselves. The impact of that choice depends on the workloads, as in a typical line of a business app you might not have to worry about performance price. Even if you have to, you optimize the bottlenecks, not the whole system. Sorting is a specialized problem to solve and gains a lot from native code optimizations.

EDIT:

Marc Gravell pointed out that current Compare method is not optimal.

very interesting, but also looks like an artificially slow managed compare; why not just => x - y ?

— Marc Gravell (@marcgravell) August 1, 2018

After implementation of x-y logic the results looks slightly better for ManagedSort.

Method | ListSize | Mean |

------------ |--------- |--------------:|

NativeSort | 10 | 27.26 ns |

ManagedSort | 10 | 126.69 ns |

NativeSort | 100 | 332.49 ns |

ManagedSort | 100 | 1,235.43 ns |

NativeSort | 1000 | 5,870.38 ns |

ManagedSort | 1000 | 20,316.62 ns |

NativeSort | 10000 | 89,473.91 ns |

ManagedSort | 10000 | 265,889.76 ns |For 10000 items the mean time for managed sort has dropped by around 14%.

Calling unmanaged code

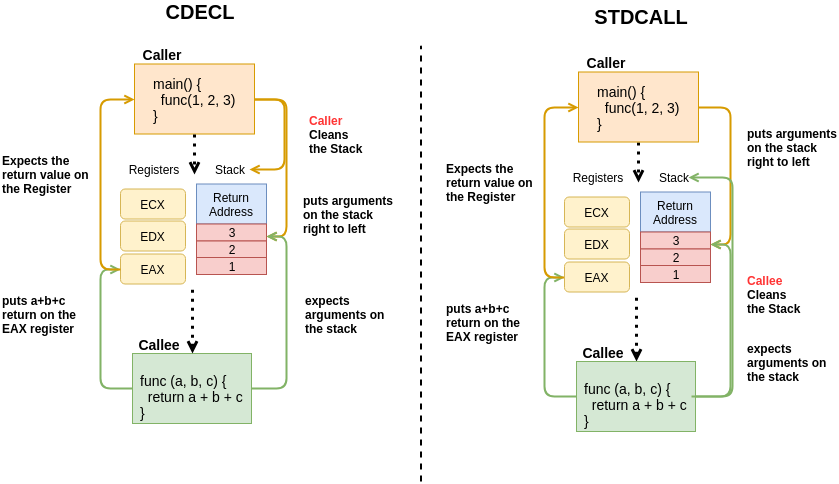

There is a special keyword extern that is used to create connection beetwen managed and native code. It is used to tell the runtime that implementation of a function is in a different (external) place. There are two ways to call external code.

P/InvokeInternalCall

P/Invoke (Platform Invocation)

Platform invocation is the mechanism provided by the

Common language runtimeto facilitate the calls from managed code to unmanaged code functions. Behind the scenes the runtime constrcuts the so-called stub, or thunk, which allows the addressing of the unmanaged function and conversion of managed argument types to the appropriate unmanaged types back. This conversion is known as parameter marshalling.1

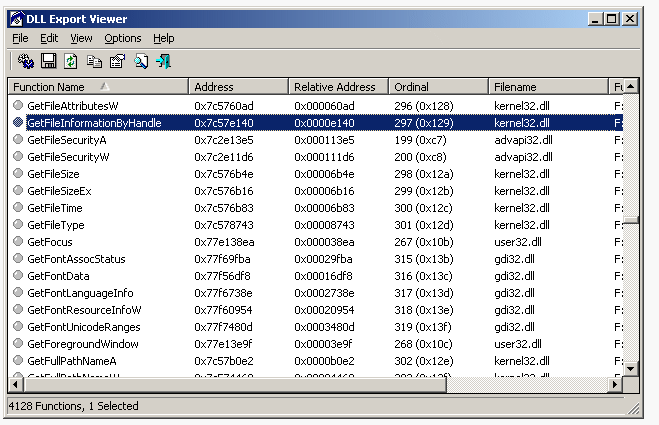

To call unmanaged code using P/Invoke, a function has to be extern and have DLLImport attribute. This attribute takes DLL file library name as a parameter. DLL files are Dynamically-linked-libraries containing compiled code and metadata about it. One of the metadata is an address table holding memory addresses to all exported functions - entry points.

[DllImport("nonexistinglib.dll")]

static extern bool Function(int i);In this example, Function is in an external DLL, it is generated to an IL code with pinvokeimpl keyword.

IL

.method private hidebysig static pinvokeimpl("nonexistingLib.dll" winapi)

bool Function (

int32 i

) cil managed preservesig

{

}Pinvokeimpl tells the runtime that this is an unmanaged method called using P/Invoke. It is available in library nonexistinglib.dll and has the calling convention winapi. More on calling conventions later in this post, but in a nutshell - calling convention describes how to call this particular function, something like contract between HTTP services. unmanaged dll expects certain contract to be met in order to accept the call to a function. winapi convention is an alias of __stdcall.

What is happening in this example with boolean function, is CLR:

- JIT’s the code (Just in time compilation)

- finds pinvokeimpl

- finds the entry point to the unmanaged function

- prepares the

contractusing winapi calling convention andmarshallsparameter int32 i (marshalling -> serialization) - calls the function

- gets

boolvalue back - uses

winapicalling convention tounmarshallthe bool value

It is actually a bit more complicated as there are things like execution context or sentinel item put on the stack frame to mark the boundary between managed and unmanaged code, but we are not going to get into these details.

A good real-life example of P/Invoke is FileStream.Read2. When Read is called in the end Win Api DLL's KERNEL32 and WIN32.ReadFile is used. This dll is part of Windows kernel.

// Stack trace of FileStream.Read

FileStream.Read

-> ReadCore

-> BeginReadCode

-> ReadFileNative

-> Win32.ReadFile

[DllImport(KERNEL32, SetLastError=true)]

unsafe internal static extern int Win32.ReadFileInternalCall

InternallCall is a different way to call an unmanaged function. It is more efficient due to InternallCalls being in the CLR context, giving a possibility to relax some time-consuming rules and save on operations around security, exception handling etc. Unfortunately it is not possible to create InternalCalls in assemblies. This call can be only be used when calling functions implemented in CLR. CLR is not only about runtime, it contains optimized code used in many places like StringBuilder.ToString(). This is one of the reasons why using StringBuilder is a good practice.

StringBuilder provides a good example of InternalCall. When ToString on StringBuilder 3 is called it is actually calling FrameAllocatedString down the stack. This function is inside CLR and uses.4

StringBuilder.ToString()

-> string.FastAllocateString

-> FrameAllocatedString

[MethodImplAttribute(MethodImplOptions.InternalCall)]

internal extern static String FastAllocatedString(int length);From the IL point of view, the code looks a bit different than in P\Invoke and uses internalcall keyword.

[MethodImpl(MethodImplOptions.InternalCall)]

static extern bool Function(int i);

IL

.method private hidebysig static

bool CallFunction (

int32 i

) cil managed internalcall

{

}internalcall - tells the runtime to look for the implementation of the code inside CLR itself. CLR maintains its own function entry point address table(example late in the post) used to find the address with the entry point. It also uses special calling convention __fastcall (more on this in next post).

It is possible to add new InternalCalls but it requires changes in CLR. You would then have to compile your customized CLR and run your code using this new runtime. Instruction on how to do this are available on the github - building5 - running6.

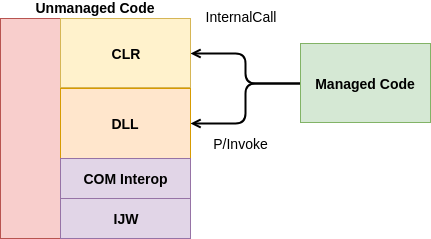

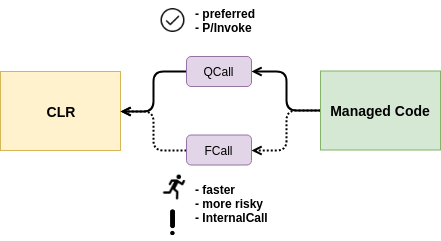

Calling CLR from managed code

We have discussed two ways of calling unmanaged code. I want to focus now on how actually CLR called. Both P/Invoke and InternalCall are translated to something slightly different within the CLR context - ECall.

ECall is a private native calling interface. This interface is inside CLR. When the managed code wants to access internal code in CLR it tells the runtime (execution engine) to use ECall to find the function and its entry point. As with previous examples, there is also calling convention and marshalling done along the way.

ECallis a set of tables to call functions within the EE (Execution Engine) from the classlibs. First we use the class name & namespace to find an array of function pointers for a class, then use the function name (& sometimes signature) to find the correct function pointer for your method. 7

There are two types of ECalls - QCall and FCall. FCall8 is more performant but more risky, much more difficult to write correctly and uses InterrnalCall. QCall is less performant but much safer and uses P/Invoke. Qcallt is mentioned as a prefered option when calling code in CLR. FCall` should only be used when there really a good reason for it.

QCallsare the preferred mechanism going forward. You should only useFCallswhen you are “forced” to. This happens when there is a common “short path” through the code that is important to optimize. This short path should not be more than a few hundred instructions, cannot allocate GC memory, take locks or throw exceptions 9

Microsoft is moving more code from FCall to managed code.

We have ported some parts of the CLR that were heavily reliant on FCalls to managed code in the past (such as Reflection and some Encoding & String operations), and we want to continue this momentum. We may port our number formatting & String comparison code to managed in the future. [^fcall-deprecation]

QCall10

- is safer but less performant

- uses

P/Invoke - default and preferred choice

Example of QCall declaration on the managed side.

[DllImport(JitHelpers.QCall, CharSet = CharSet.Unicode)]

static extern bool Bar(int flags, string inString, StringHandleOnStack retString);FCall11

- uses

InternalCall - should only be used when it is possible to make performance gains by using it

- are more performant when

Frames(HelperMethodFrame) are not used - you need to create

Frameto handle Exceptions orGC - susceptible to

GC holes12 andGC starvation - more error-prone due to manual control of

GCandFrames

Example of FCall declaration on the managed side.

[MethodImplAttribute(MethodImplOptions.InternalCall)]

private static extern bool TrySZSort(Array keys, Array items, int left, int right);I was curious what needs to be done to generate new FCALL. This commit from MS team is a great example.

- register function in a ECClass table - static table with entry points to

FCALLfunctions. This is used by jitter to find the entry points. Example: TrySZSort entrypoint - add function to the

ECFuncarray for a class that has this function. Example: TrySZSort - add extern static function with

InternalCalldecorator in managed code - use FCIMPL macro to generate function - your code needs to be inside this macro

.NET Sort -> TrySZSort uses FCall

List<T>.Sort()

---> List<T>.Sort(index = 0, count = Count, comparer=null)

------> Array.Sort<T>(_items, index, count, comparer);

---------C++ native world -------

-----------> TrySZSortTrySZSort is exposed to managed code using InternalCall, it is using FCall. On the CLR side it uses FCIMPL4 macro for function generation.

FCIMPL4(FC_BOOL_RET, ArrayHelper::TrySZSort, ArrayBase * keys, ArrayBase * items

, UINT32 left, UINT32 right)4 is a number of arguments, FC_BOOL_RET tells the macro that this function returns BOOL. Why FCIMPL macro is needed?

Since

FCALLShave to conform to theExecution Enginecalling conventions and not to C calling conventions,FCALLS, need to be declared using special macros(FCIMPL*)that implement the correct calling conventions.

It is all to do with calling conventions. It was mentioned before that calling convention is like a contract describing the way functions communicate using stack and registers but how does it work? This will be covered in the next post with a journey to the assembly code.